Current synaptic plasticity models share one decisive property that may not be biologically adequate and that has important repercussions for the kinds of memory and learning algorithms that can be implemented: each processing or transmission event is also an adaptive learning event.

In biology, by contrast, many pathways may act as filters between the use of a synapse and the adaptation of its strength. In LTP/LTD, it typically takes 20 minutes for the effects to become observable. The adaptation requires the activation of intracellular pathways, often the co-occurrence of GPCR activation, and even nuclear read-out.

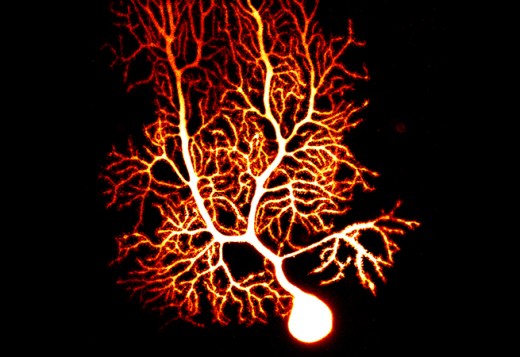

Therefore we have suggested a different model, greatly simplified at first in order to test its algorithmic properties. We include intrinsic excitability in learning (LTP-IE, LTD-IE). The main innovation is that we separate learning or adaptation from processing or transmission. Transmission events leave traces at synapses and neurons that disappear over time (short-term plasticity), unless they add up over time to unusually high (or low) neural activations, as determined by threshold parameters. Only if a neuron engages in such a high (or low) activation-plasticity event do we get long-term plasticity at both neurons and synapses, in a localized way. Such a model is in principle capable of operating in a sandpile fashion. We do not yet know what properties the model may exhibit. Certain hypotheses exist concerning the abstraction and compression of a sequence of related inputs, and the development of individual knowledge.
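The separation of transmission from learning can be illustrated with a minimal sketch. It is not the model itself: the class name `Neuron`, the exponential trace decay, the threshold values, the fixed update size `rate`, and the trace reset after a plasticity event are all assumptions made for illustration. It covers a single neuron only and does not attempt the sandpile-like behaviour of a network.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Neuron:
    """Hypothetical single neuron; every parameter value here is an assumption."""
    weights: List[float]        # long-term synaptic strengths
    excitability: float = 1.0   # long-term intrinsic excitability (a gain factor)
    decay: float = 0.5          # assumed per-step decay factor of the traces
    high: float = 1.7           # assumed upper threshold on the neural trace
    low: float = -1.7           # assumed lower threshold on the neural trace
    rate: float = 0.1           # assumed size of one long-term update
    trace: float = 0.0          # short-term neural trace
    syn_traces: List[float] = field(default_factory=list)  # short-term synaptic traces

    def __post_init__(self) -> None:
        if not self.syn_traces:
            self.syn_traces = [0.0] * len(self.weights)

    def transmit(self, inputs: List[float]) -> Tuple[float, bool]:
        """Process one event; return (activation, long_term_change_happened)."""
        # Transmission reads the long-term parameters but does not modify them.
        drive = [w * x for w, x in zip(self.weights, inputs)]
        activation = self.excitability * sum(drive)
        # Each event only leaves traces, which fade unless events accumulate.
        self.syn_traces = [self.decay * t + d for t, d in zip(self.syn_traces, drive)]
        self.trace = self.decay * self.trace + activation
        return activation, self._plasticity_event()

    def _plasticity_event(self) -> bool:
        """Apply long-term plasticity only if the neural trace crosses a threshold."""
        if self.low < self.trace < self.high:
            return False
        sign = 1.0 if self.trace >= self.high else -1.0
        # Localized update: this neuron's excitability and its own synapses only.
        self.excitability += sign * self.rate
        self.weights = [w + sign * self.rate * abs(t)
                        for w, t in zip(self.weights, self.syn_traces)]
        # Assumption: traces are consumed by the plasticity event.
        self.trace = 0.0
        self.syn_traces = [0.0] * len(self.weights)
        return True
```

With these assumed values, an isolated call such as `Neuron(weights=[1.0]).transmit([1.0])` leaves the weights and excitability untouched, and only a run of closely spaced events pushes the trace over the threshold and triggers a long-term change.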