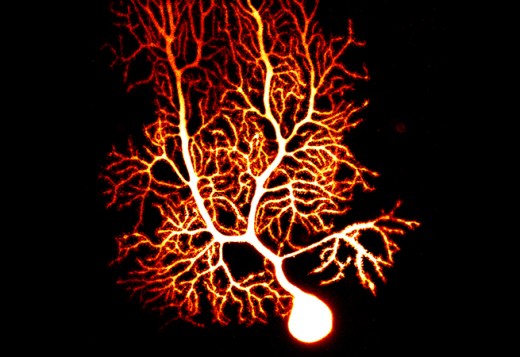

An important topic for understanding intrinsic excitability is the distribution and activation of ion channels. In this respect, the co-regulation of ion channels is of significant interest. MacLean et al. (2003) showed that when an A-type potassium channel is overexpressed by injecting shal RNA into neurons of the lobster stomatogastric ganglion, the increase in IA is compensated by an upregulation of Ih, so that the spiking behavior remains unaltered.

A non-functional shal mutant, which produces no IA of its own, had the same effect: Ih was upregulated even though IA was unaltered, and as a result spiking activity increased. This shows that the co-regulation is not triggered at the membrane by sensing spiking behavior. (This contrasts with, e.g., O’Leary et al. (2013), who assume that ion channel expression is homeostatically regulated by spiking activity at the membrane.)
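The logic of the mutant experiment can be made concrete with a deliberately crude toy model. This is a sketch under stated assumptions, not the model used by MacLean et al.: Ih is assumed to scale with the total amount of shal transcript (functional or not), IA only with its functional fraction, and "excitability" is a hypothetical proxy, gh minus gA. The names `Neuron`, `inject_shal` and `coupling` are illustrative only.

```python
from dataclasses import dataclass

# Toy sketch (hypothetical, not MacLean et al.'s model): Ih expression is
# coupled to the amount of shal transcript, not to spiking activity.


@dataclass
class Neuron:
    shal_mrna: float = 1.0        # shal transcript, arbitrary units
    shal_functional: float = 1.0  # fraction of shal transcript giving working channels
    coupling: float = 1.0         # assumed gain from shal transcript to Ih

    @property
    def g_A(self) -> float:
        # Only functional shal channels contribute to the A-type conductance.
        return self.shal_mrna * self.shal_functional

    @property
    def g_h(self) -> float:
        # Ih follows total shal transcript, functional or not.
        return self.coupling * self.shal_mrna

    def excitability(self) -> float:
        # Crude proxy: Ih raises excitability, IA lowers it.
        return self.g_h - self.g_A


def inject_shal(n: Neuron, amount: float, functional: bool) -> Neuron:
    """Return a new Neuron after injecting `amount` of wild-type or mutant shal."""
    total = n.shal_mrna + amount
    working = n.shal_mrna * n.shal_functional + (amount if functional else 0.0)
    return Neuron(shal_mrna=total, shal_functional=working / total, coupling=n.coupling)


control = Neuron()
wild_type = inject_shal(control, 1.0, functional=True)
mutant = inject_shal(control, 1.0, functional=False)
for name, n in [("control", control), ("wild type", wild_type), ("mutant", mutant)]:
    print(f"{name:10s} gA={n.g_A:.1f} gh={n.g_h:.1f} excitability={n.excitability():.1f}")
```

Injecting functional shal raises both conductances and leaves the proxy unchanged; the mutant raises only gh, so the proxy rises, mirroring the increased spiking in the mutant experiment.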

In Drosophila motoneurons, the expression of shal and shaker, both of which carry IA, is reciprocally coupled: if one is reduced, the other is upregulated so that IA at the membrane stays at a constant level. Other pairs of currents with antagonistic effects, such as INaP and IM, correlate positively: if one is reduced, the other is reduced as well, so that their net effect stays the same (Golowasch, 2014). Similar effects have been documented in a number of publications (e.g. MacLean et al., 2005; Schulz et al., 2007; Tobin et al., 2009; O’Leary et al., 2013).
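Both kinds of coupling can be captured by a single hypothetical feedback rule that holds a combined quantity constant. This is a sketch, not a published model: the function `regulate`, its rate, and the idea that the cell reads out "total IA" or "net drive" as an internal signal are assumptions. What it illustrates is that the sign of the correlation follows from whether the two currents act in the same direction or in opposite directions.

```python
def regulate(fixed: float, adjustable: float, target: float, sign: int,
             rate: float = 0.5, steps: int = 50) -> float:
    """Slowly adjust `adjustable` until fixed + sign * adjustable == target.

    sign=+1: both currents push in the same direction (shal and shaker both
             carry IA), so the adjustable one moves opposite to the fixed one.
    sign=-1: the currents oppose each other (INaP depolarizes, IM
             hyperpolarizes), so the adjustable one follows the fixed one.
    """
    for _ in range(steps):
        error = target - (fixed + sign * adjustable)
        # Expression levels are clamped at zero.
        adjustable = max(0.0, adjustable + sign * rate * error)
    return adjustable


# Normally shal = shaker = 1.0, so total IA = 2.0; shal is knocked down to 0.4.
shaker = regulate(fixed=0.4, adjustable=1.0, target=2.0, sign=+1)
print(round(shaker, 3))  # → 1.6 (shaker goes up)

# Normally INaP = 1.0 and IM = 0.8, net drive 0.2; INaP is reduced to 0.5.
im = regulate(fixed=0.5, adjustable=0.8, target=0.2, sign=-1)
print(round(im, 3))  # → 0.3 (IM goes down too)
```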

We must therefore assume that the expression level of ion channels is sensed and regulated inside the cell, and that the expression of genes for different ion channels is coupled, either by genetic regulation or at the level of RNA.

To summarize: when IA expression is high, Ih is also upregulated. When one gene responsible for IA is suppressed, the other gene is expressed more strongly, so that the overall level of IA stays the same. When INaP, a persistent sodium current, is reduced, IM, a potassium current, is reduced as well.

It is important to note that these ion channels may compensate for each other in terms of overall spiking behavior, yet they differ subtly in how they are activated, e.g. by particular spiking patterns or by neuromodulation. For instance, suppose cell A has reduced both INaP and IM, compensating so that its spiking behavior stays the same. If we then activate muscarinic receptors on A, this will affect IM, which is suppressed by muscarinic signaling, but not INaP. Cell A, whose behavior was roughly the same as before, now behaves differently under these conditions.
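A minimal numerical illustration of this point, assuming a crude excitability proxy (gNaP minus the unsuppressed part of gM) and a hypothetical parameter `muscarinic` giving the fraction of IM suppressed by the modulator; INaP is assumed to be insensitive to it. The conductance values are arbitrary.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    g_NaP: float  # persistent sodium conductance (depolarizing), arbitrary units
    g_M: float    # M-type potassium conductance (hyperpolarizing), arbitrary units

    def net_drive(self, muscarinic: float = 0.0) -> float:
        # Crude excitability proxy: depolarizing minus hyperpolarizing conductance.
        # `muscarinic` in [0, 1] is the (hypothetical) fraction of IM suppressed
        # by muscarinic receptor activation; INaP is assumed to be unaffected.
        return self.g_NaP - self.g_M * (1.0 - muscarinic)


baseline = Cell(g_NaP=1.0, g_M=0.75)
compensated = Cell(g_NaP=0.5, g_M=0.25)  # both currents reduced together

print(baseline.net_drive(), compensated.net_drive())        # 0.25 0.25
print(baseline.net_drive(0.5), compensated.net_drive(0.5))  # 0.625 0.375
```

Both cells look identical at baseline, but the same modulatory input changes the cell that kept more IM more strongly, simply because it has more IM to suppress.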

Modeling this, short of a full model of the cell's interior, requires internal state variables that guide ion channel expression and thereby regulate intrinsic excitability. These variables would represent ion channel proteins and their interactions, and in this way ensure acceptable spiking behavior of the cell. This leads to the idea of an internal module that sets the parameters the neuron needs in order to function. Such a module, self-organizing its state variables according to specified objective functions, could greatly simplify system design. Instead of tuning system parameters by external methods, as is necessary for ion-channel-based models, each neuronal unit would be responsible for its own ion channels and could tune them independently of the rest of the system.
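One way to read this proposal in code is a per-neuron object that owns its expression levels and tunes them against an objective it is given. Everything here is a hypothetical sketch: the class `InternalModule`, the finite-difference gradient descent (chosen only for brevity; any local optimizer would do), and the firing-rate proxy in `firing_objective` are assumptions, not an established method.

```python
from typing import Callable, Dict


class InternalModule:
    """Hypothetical per-neuron module that tunes its own channel expression."""

    def __init__(self, levels: Dict[str, float],
                 objective: Callable[[Dict[str, float]], float],
                 lr: float = 0.1, eps: float = 1e-4):
        self.levels = dict(levels)   # internal state variables (expression levels)
        self.objective = objective   # lower is better
        self.lr = lr
        self.eps = eps

    def step(self) -> float:
        # Central-difference gradient of the objective for each state variable.
        grads = {}
        for name in self.levels:
            up, down = dict(self.levels), dict(self.levels)
            up[name] += self.eps
            down[name] -= self.eps
            grads[name] = (self.objective(up) - self.objective(down)) / (2 * self.eps)
        for name, g in grads.items():
            # Expression levels cannot become negative.
            self.levels[name] = max(0.0, self.levels[name] - self.lr * g)
        return self.objective(self.levels)

    def membrane_parameters(self) -> Dict[str, float]:
        # What the rest of the network sees: conductances set by the module.
        return dict(self.levels)


def firing_objective(levels: Dict[str, float], target: float = 0.5) -> float:
    # Hypothetical firing-rate proxy: depolarizing minus hyperpolarizing conductance.
    drive = levels["g_NaP"] - levels["g_M"]
    return (drive - target) ** 2


module = InternalModule({"g_NaP": 1.0, "g_M": 0.75}, firing_objective)
for _ in range(60):
    module.step()
params = module.membrane_parameters()
print(round(params["g_NaP"] - params["g_M"], 6))  # → 0.5
```

The objective only fixes the difference between the two conductances, so many combinations of gNaP and gM satisfy it, much like the degenerate channel sets discussed above; which one the module reaches depends on where it starts.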

Linked to the idea of internal state variables is the idea of internal memory, which I have referred to several times in this blog. If an internal module of co-regulated variables sets the external parameters of each unit, then this module may serve as a latent memory for variables that are not currently expressed at the membrane (see Er81). The time courses of expression and activation at the membrane and of internal co-regulation need not be the same. This offers an opportunity for memory inside the cell, separate from information processing within a network of neurons.
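A minimal sketch of such a latent memory, assuming two state variables per channel with very different time constants: a slow internal pool and a fast membrane level that can be switched off. The class `LatentChannel`, its time constants and the `expressed` flag are hypothetical; they stand in for whatever internal co-regulation and trafficking the cell actually uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LatentChannel:
    """Hypothetical channel with a slow internal pool and a fast membrane level."""
    internal: float = 0.0        # slow internal state (e.g. transcript or protein pool)
    membrane: float = 0.0        # level currently expressed at the membrane
    tau_internal: float = 100.0  # slow time constant, in steps (assumed)
    tau_membrane: float = 5.0    # fast time constant, in steps (assumed)

    def step(self, drive: Optional[float], expressed: bool) -> None:
        # The internal pool only changes when a regulatory signal is present;
        # otherwise it holds its value (the latent memory).
        if drive is not None:
            self.internal += (drive - self.internal) / self.tau_internal
        # The membrane level tracks the pool when expression is allowed
        # and decays toward zero when it is not.
        target = self.internal if expressed else 0.0
        self.membrane += (target - self.membrane) / self.tau_membrane


ch = LatentChannel()
for _ in range(500):   # learning: regulatory drive present, channel expressed
    ch.step(drive=1.0, expressed=True)
for _ in range(100):   # storage: no drive, channel removed from the membrane
    ch.step(drive=None, expressed=False)
hidden = (ch.internal, ch.membrane)
for _ in range(30):    # recall: expression allowed again, still no drive
    ch.step(drive=None, expressed=True)
print(f"hidden: internal={hidden[0]:.3f} membrane={hidden[1]:.3f}")
print(f"recalled: membrane={ch.membrane:.3f}")
```

While the channel is hidden, the network sees nothing of it, yet the internal pool keeps its value; once expression is permitted again, the membrane level returns within a few fast time constants without any new regulatory drive.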